BM-K / KoMiniLM

Last updated: August 30, 2023

feature-extraction

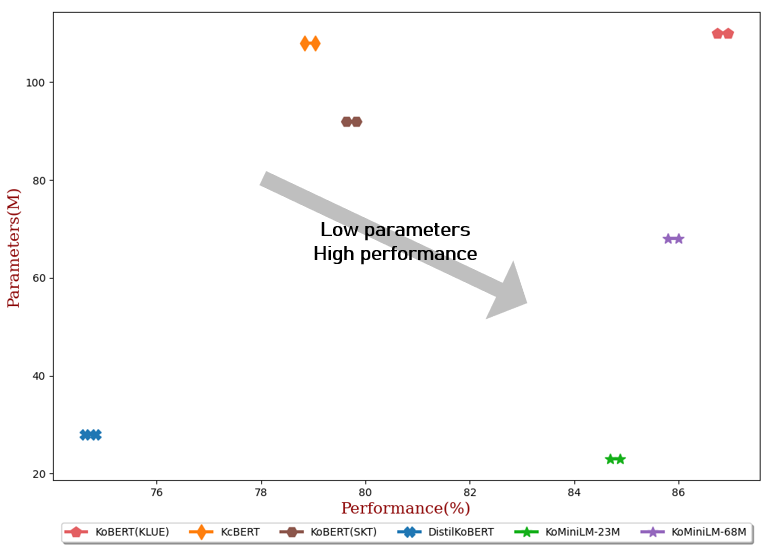

Introduction of KoMiniLM

Runs of BM-K KoMiniLM on huggingface.co

Total runs: 32
24-hour runs: -102
3-day runs: -105
7-day runs: -106
30-day runs: -99


Other API from BM-K

Total runs: 351.1K; Run Growth: 330.4K; Growth Rate: 94.09%
Total runs: 27.1K; Run Growth: 3.0K; Growth Rate: 10.70%
Total runs: 2.3K; Run Growth: 0; Growth Rate: 0.00%
Total runs: 2.3K; Run Growth: 0; Growth Rate: 0.00%
Total runs: 2.3K; Run Growth: 0; Growth Rate: 0.00%
Total runs: 2.3K; Run Growth: 0; Growth Rate: 0.00%
Total runs: 218; Run Growth: 21; Growth Rate: 9.42%
Total runs: 159; Run Growth: 154; Growth Rate: 96.25%
Total runs: 112; Run Growth: 107; Growth Rate: 95.54%
Total runs: 27; Run Growth: 16; Growth Rate: 59.26%
Total runs: 0; Run Growth: 0; Growth Rate: 0.00%